The perimeter-based security model that most governments and institutions built their digital defenses around rested on a single assumption that held long enough to become doctrine. Internal networks were trusted. External traffic was suspect. The job of the security infrastructure was to keep the outside out, and everything inside the boundary was, by definition, safe enough to work with. That assumption survived decades of incremental threat evolution because the actors probing the boundary were still fundamentally constrained by the same physics as the defenders. They could compromise one system at a time, move laterally through a network at human pace, and leave traces that forensic analysis could eventually reconstruct into a coherent picture of what happened and why.

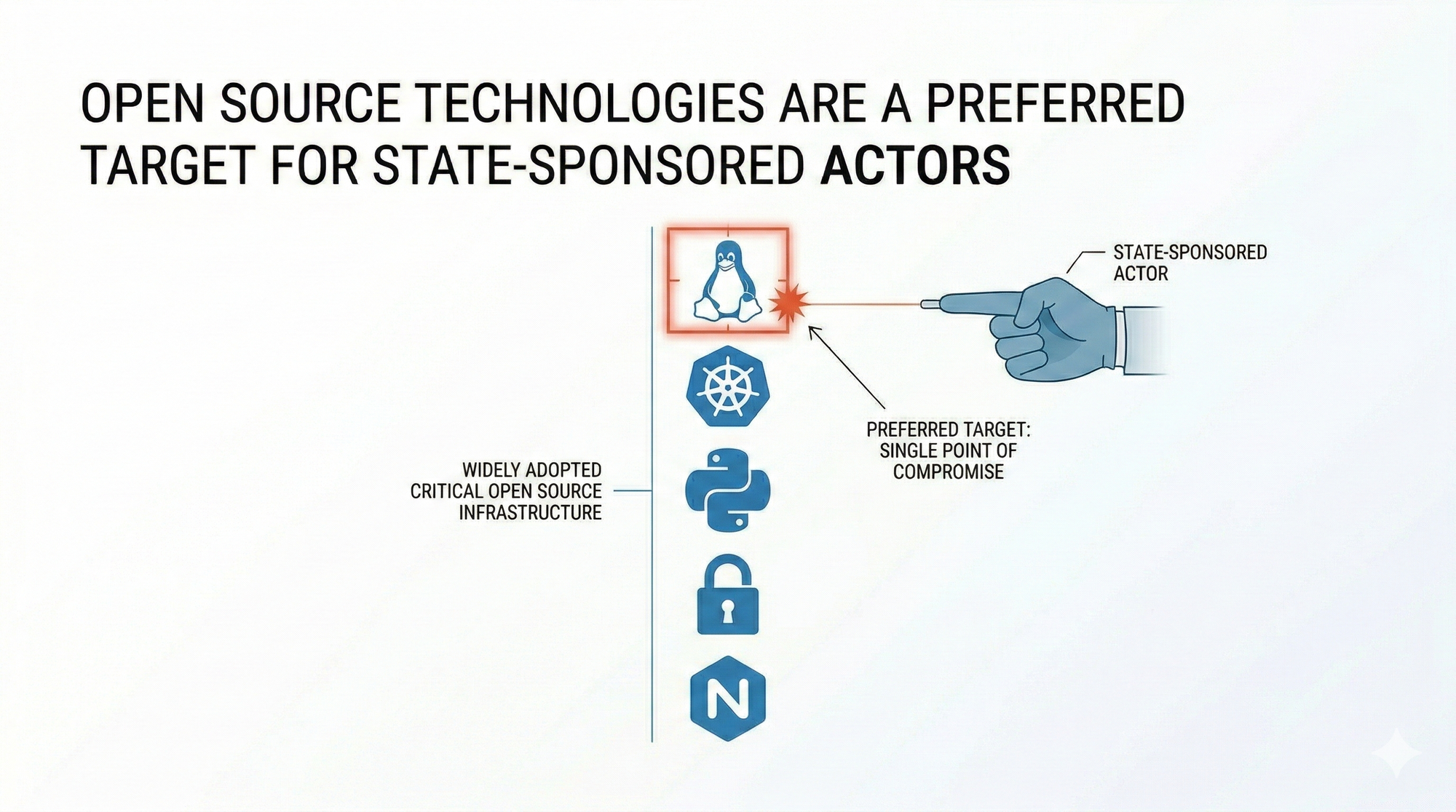

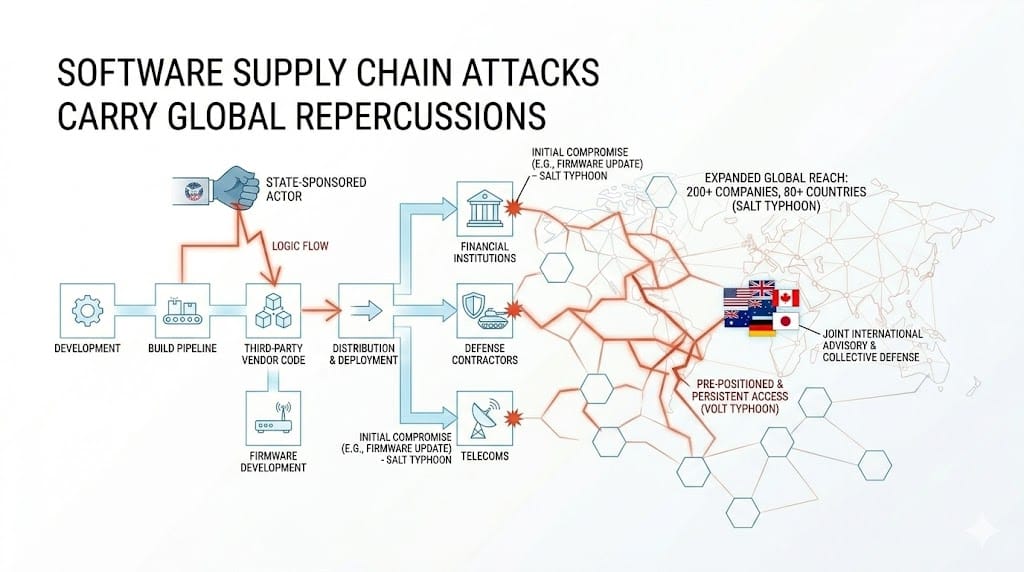

Artificial intelligence has changed both sides of that equation simultaneously, and the security architecture that most organisations and government institutions are running today was not designed for the environment that change has produced. AI amplifies the attacker's capability at every layer. It automates phishing campaigns sophisticated enough to defeat users trained to spot them. It accelerates the discovery of vulnerabilities in target systems. And it enables nation-state actors and criminal organisations alike to operate at a scale and a speed that manual threat analysis cannot track in real time. But AI has also transformed the defender's infrastructure in ways that perimeter security was never designed to protect, and that transformation is where the governance gap opens up.

Why Traditional Defenses Fail for AI Systems

Classic network-perimeter controls (firewalls, intrusion detection systems, and network data loss prevention tools) were built to detect threats by recognising static, predictable patterns in network traffic. AI systems break every assumption those controls depend on. AI network traffic is routinely encrypted, which removes the visibility that network-based controls require to function. AI operates at the data and application layers rather than the network layer, which means the controls designed to inspect traffic cannot differentiate between a legitimate query and a data exfiltration attempt when both travel through the same encrypted channel. And AI activity is fundamentally dynamic. Unlike deterministic applications that produce the same output for the same input every time, AI generates variable outcomes, which means signature-based defenses have no stable pattern to match against.

The consequence is architectural rather than incremental. Protecting AI systems and the data they operate on requires moving from a network-centric security model to an asset-centric and data-centric one, where protection travels with the data regardless of where it lives and what infrastructure it passes through. Zero Trust is the framework that operationalises that shift, and the three principles it rests on (verify explicitly, use least-privilege access, and assume breach) are precisely the principles that AI's threat profile demands.
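One way to picture a data-centric decision is the minimal sketch below. It is illustrative only: the `Request` fields, the `Sensitivity` labels, and the `decide` function are hypothetical names invented for this example and are not drawn from any specific Zero Trust product or standard. The point it tries to show is that the decision depends on the verified identity, the task scope, and the label attached to the data, and never on which network the request came from.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Tuple


class Sensitivity(IntEnum):
    # Hypothetical classification labels, ordered from least to most sensitive.
    PUBLIC = 0
    INTERNAL = 1
    RESTRICTED = 2


@dataclass(frozen=True)
class Request:
    principal: str              # human user or AI agent identifier
    authenticated: bool         # outcome of an explicit, per-request identity check
    granted_scopes: frozenset   # scopes issued for the current task only
    required_scope: str         # scope the requested operation needs
    clearance: Sensitivity      # highest label this principal may access
    data_label: Sensitivity     # label that travels with the data itself
    source_network: str         # kept for audit; deliberately never used to allow access


def decide(req: Request) -> Tuple[bool, str]:
    """Return (allowed, reason) for a single access request."""
    # Verify explicitly: no request is trusted because of where it originates.
    if not req.authenticated:
        return False, "identity not verified for this request"
    # Least privilege: the operation must be covered by a scope granted for this task.
    if req.required_scope not in req.granted_scopes:
        return False, "scope not granted for this task"
    # Data-centric control: the label on the data decides, not the network segment.
    if req.data_label > req.clearance:
        return False, "data label exceeds principal clearance"
    return True, "allowed"
```

Assume breach shows up here as an omission: `source_network` is recorded but plays no part in the decision, so an unauthenticated request from an "internal" address is denied exactly as an external one would be.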

The Non-Human Identity Problem No Framework Has Solved

Every serious discussion of Zero Trust implementation eventually arrives at identity as the new perimeter, and that framing is correct as far as it goes. But identity management for AI agents introduces a category of problem that existing frameworks, including NIST SP 800-207 and the frameworks derived from it, were not written to address, because autonomous agents were not a material component of enterprise or government infrastructure when those frameworks were specified.

An AI agent acting inside a government system, a financial institution, or a critical infrastructure operator is a non-human identity that can be instantiated at volume, cloned across environments, and compromised at the model layer through poisoned training data or adversarial manipulation before it ever authenticates against a single access control gate. It presents valid credentials because they are genuine. It passes verification checks because its behaviour does not deviate from its established baseline. The compromise is in its reasoning, not its identity, and the identity layer has no mechanism to detect it.

Zero Trust for AI has to extend the "verify explicitly" principle downward through the stack to model provenance. Where a model was trained, on what data, by whom, and under what integrity controls are questions that belong in the security review of any AI deployment with access to sensitive information. Just-in-time and just-enough-access controls have to apply to agents as rigorously as they apply to human users, with ephemeral, task-scoped credentials that expire when the specific workflow step they were issued for concludes and cannot be reused by a subsequent agent in the chain. And immutable audit logs that capture not just what an agent did but why it made specific decisions are the minimum requirement for any deployment where accountability has legal or diplomatic consequences.
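The credential and logging requirements can be sketched in a few dozen lines. What follows is a minimal illustration under stated assumptions, not a production design: `CredentialBroker` and `AuditLog` are hypothetical classes written for this example, state is held in memory, and the log is hash-chained, which makes tampering detectable rather than making the log truly immutable (that would additionally need write-once storage or external anchoring).

```python
import hashlib
import json
import secrets
import time
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class TaskCredential:
    agent_id: str
    task_step: str
    expires_at: float
    used: bool = False


class CredentialBroker:
    """Issues single-use credentials bound to one agent and one workflow step."""

    def __init__(self, ttl_seconds: float = 60.0):
        self.ttl_seconds = ttl_seconds
        self._issued: Dict[str, TaskCredential] = {}

    def issue(self, agent_id: str, task_step: str, now: Optional[float] = None) -> str:
        now = time.time() if now is None else now
        token = secrets.token_urlsafe(32)  # unguessable random token
        self._issued[token] = TaskCredential(agent_id, task_step, now + self.ttl_seconds)
        return token

    def redeem(self, token: str, agent_id: str, task_step: str,
               now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        cred = self._issued.get(token)
        # Reject unknown, already-used, or expired credentials.
        if cred is None or cred.used or now >= cred.expires_at:
            return False
        # Reject reuse by a different agent or for a different workflow step.
        if cred.agent_id != agent_id or cred.task_step != task_step:
            return False
        cred.used = True
        return True


class AuditLog:
    """Append-only log in which each entry commits to the hash of the previous one."""

    GENESIS = "0" * 64
    FIELDS = ("agent_id", "action", "rationale", "prev")

    def __init__(self) -> None:
        self._entries: List[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, agent_id: str, action: str, rationale: str) -> dict:
        prev = self._entries[-1]["hash"] if self._entries else self.GENESIS
        body = {"agent_id": agent_id, "action": action, "rationale": rationale, "prev": prev}
        entry = {**body, "hash": self._digest(body)}
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = self.GENESIS
        for entry in self._entries:
            body = {k: entry[k] for k in self.FIELDS}
            if entry["prev"] != prev or entry["hash"] != self._digest(body):
                return False
            prev = entry["hash"]
        return True
```

The `rationale` field is the part that addresses the "why": in this sketch it is a free-text string the agent supplies, and how faithfully such a string reflects a model's actual reasoning is an open question that the code does not settle.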

The Regulatory Landscape and the Governance Gap

The absence of unified global AI security regulations has created a situation where institutions that operate across jurisdictions are navigating a patchwork of independently developed frameworks whose requirements do not always align. The NIST AI Risk Management Framework provides a comprehensive structure for identifying and mitigating AI-related risk. The Cloud Security Alliance's AI Controls Matrix establishes a set of security controls specific to AI deployments. The EU AI Act introduces binding obligations for high-risk AI systems, with implications for organisations outside the EU whose systems are placed on the EU market or whose outputs are used within the Union, regardless of where those systems are hosted.

For government institutions and international organisations, the practical consequence of operating in this fragmented regulatory environment is that Zero Trust adoption has become one of the few available mechanisms for demonstrating due diligence across multiple jurisdictions simultaneously. An institution that can document continuous verification of all identities, including AI agents, enforced least-privilege access at the task level, and an assume-breach posture with active monitoring and response capabilities has built a security architecture that satisfies the intent of most existing frameworks, even where the letter of those frameworks has not caught up with the realities of agentic AI deployment.

The shared responsibility model that governs AI security adds another layer of complexity for institutions procuring AI capabilities from commercial providers. Depending on whether the deployment is Infrastructure as a Service, Platform as a Service, or Software as a Service, the boundary between what the provider secures and what the institution must secure itself shifts significantly. An institution that deploys AI on its own infrastructure carries the full burden of model safety, data governance, and application security. An institution using a managed AI service shares that burden with the provider but retains responsibility for data access controls, identity management, and how the service is used. Understanding where that boundary sits and building the institution's security controls around it is not a technical detail. It is a governance decision with legal and diplomatic implications that procurement officers and policymakers need to be equipped to make.

AI as Both the Threat and the Defense

The relationship between AI and Zero Trust is not one-directional, and treating AI purely as a threat to be managed misses the strategic opportunity. AI is simultaneously the most significant new attack surface in modern security architecture and the most capable tool available for operationalising the continuous verification and adaptive access controls that Zero Trust requires at scale. Three capabilities in particular depend on AI to function at the speed and volume that modern infrastructure demands: behavioural analytics that can establish normal baselines and surface anomalies across millions of identity interactions, automated threat detection that can identify and isolate a compromised agent before it propagates laterally through a system, and data classification that can govern what information AI systems can access based on sensitivity and context. Human analysts cannot perform these functions at the scale required. AI can, and the institutions that deploy it defensively while governing it through Zero Trust principles are the ones building the resilience that the current threat environment requires.
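The idea of a behavioural baseline can be made concrete with a deliberately simple stand-in. The sketch below uses a z-score over one metric (for instance, requests per hour from a single agent); the function name, the metric, and the default threshold of three standard deviations are all assumptions chosen for illustration. Real behavioural analytics would use far richer models across many signals, so this shows the shape of the technique rather than a deployable detector.

```python
from statistics import mean, stdev
from typing import List


def is_anomalous(baseline: List[float], observed: float, threshold: float = 3.0) -> bool:
    """Flag `observed` if it lies more than `threshold` sample standard
    deviations away from the mean of the baseline observations."""
    if len(baseline) < 2:
        raise ValueError("need at least two baseline observations")
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any change at all is a deviation.
        return observed != mu
    return abs(observed - mu) / sigma > threshold


# Hypothetical hourly request counts for one agent identity.
history = [12, 15, 11, 14, 13, 12, 16]
print(is_anomalous(history, 14))    # within the usual range
print(is_anomalous(history, 240))   # far outside the usual range
```

A detector of this kind only catches deviations from the baseline. An agent whose reasoning is compromised but whose observable behaviour stays inside its normal range would pass it, which is why baseline monitoring complements provenance checks and scoped credentials rather than replacing them.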

What This Means for the International Order

The geopolitical dimension of this challenge is not a projection. It is the present state of the international cybersecurity landscape. Governments are already using AI to amplify existing attack techniques at a scale and a sophistication that make the distinction between a criminal campaign and a state-sponsored one increasingly difficult to draw from the outside. Enterprise and government data has become a strategic asset that nation-state actors target for financial gain, leverage in diplomatic negotiations, and intelligence advantage. And the AI systems that process, analyse, and generate decisions from that data are now attack surfaces in their own right, not just conduits for the data they handle.

The institutions that will navigate this landscape most effectively are the ones that have accepted that the perimeter no longer exists in any meaningful sense, that the principal making access requests inside their systems is no longer reliably human, and that the governance framework adequate for a world of deterministic applications is not adequate for a world of autonomous agents operating across borders and jurisdictions at machine speed. Zero Trust for AI is not a product to be procured or a checklist to be completed. It is an architectural posture and a governance commitment that has to be built into how institutions deploy AI from the first decision rather than retrofitted after the first incident makes the gap visible. And in an international environment where the consequences of getting it wrong extend well beyond any single organisation's network boundary, that commitment is not optional.