Threat actors have repeatedly demonstrated that the most consequential attack surface in containerized infrastructure is not the moment of vulnerability discovery but the weeks that follow it, when findings sit inside security reports that no developer has the context, the time, or the tooling to act on. That window, between detection and remediation, is not a workflow inconvenience. It is a structural exposure at the infrastructure layer of organizations running financial systems, healthcare platforms, and critical communications networks, and it persists not because the detection tooling has failed but because nothing has been built to close what comes after it.

Advait Patel, Docker Captain and Senior Site Reliability Engineer at Broadcom, encountered this problem not as an abstract policy concern but as a live production failure.

In late 2024, while managing multi-tenant Kubernetes clusters running hundreds of microservices, his team identified a potential data exposure incident rooted in a vulnerable base image that had been flagged by their scanner weeks earlier. The finding had not been acted upon because it was item 31 in a 237-CVE report spanning 47 pages, most of whose entries were either irrelevant to the team's specific deployment context or indistinguishable from noise without a dedicated security analyst to interpret them. The scanner had performed correctly. The triage infrastructure around it had never been designed at all.

What Patel built in response, an open-source AI-powered Docker security scanner called DockSec, became an OWASP Incubator Project in July 2025 and has since been deployed by developers in more than 40 countries. But the more consequential output of that engineering work is the diagnostic it produced: a precise account of why organizations running fully functional detection tooling continue to ship vulnerable containers into production, and what the structural conditions are that allow known vulnerabilities to remain unpatched for weeks inside environments whose compromise would carry consequences far beyond the organizations themselves.

The Triage Gap and Its Strategic Dimensions

The problem Patel set out to solve has a technical name in practitioner circles, alert fatigue, but that framing understates what is actually happening at the organizational level. Alert fatigue implies a volume problem. What Patel's post-incident analysis revealed was a design problem: the security tooling ecosystem had been built to optimize for detection coverage, which meant producing comprehensive output that documented every possible finding regardless of whether the receiving developer had any mechanism for distinguishing a critical exploitable vulnerability from a theoretical risk in a library their application never called. The result was not that developers were overwhelmed. It was that the reports had been made structurally unactionable by the very design choices intended to make them thorough.

"The scanner had done everything right," Patel reflects. "The failure was in what happened after the scan, and I realized that nobody had actually designed that part of the workflow with the developer in mind. The reports were built to be comprehensive, not actionable, and those are very different things when you are trying to ship securely under time pressure." That distinction carries strategic weight that extends well beyond delivery timelines. The window between a vulnerability being catalogued and a developer having a clear, actionable path to remediation is precisely the interval that sophisticated threat actors are positioned to exploit, and the width of that window is determined not by the quality of the detection tooling but by the organizational and design infrastructure that sits downstream of it.

Before writing a single line of code, Patel mapped the existing tool landscape and found it cleanly divided along a line that described the gap. Tools built for security operations centers produced rigorous, comprehensive output that required security expertise to interpret and prioritize. Tools oriented toward developer experience were accessible but analytically shallow, insufficient for the environments where container security failures carry real consequence. Neither category had been designed around the specific problem of translating security findings into prioritized, context-aware remediation guidance for an engineer operating under delivery pressure without a dedicated security background. That translation layer, Patel concluded, was where the structural exposure lived, and it was the layer no commercial vendor had built because it was not the layer that procurement conversations rewarded.

"Every tool I evaluated was solving for completeness," Patel explains. "They wanted to surface every possible finding so that nothing could be missed. But completeness without prioritization is just noise with better documentation. I needed something that would tell a developer what to fix today, not what to worry about eventually." The observation is an engineering insight, but it is also a policy one: an industry that measures security tooling quality by coverage rather than by remediation outcomes will keep producing tools that detect vulnerabilities reliably and fail to prevent breaches systematically.

Architecture as Argument

DockSec's technical architecture is itself a position on where the triage gap lives. The tool integrates three established scanning instruments, Trivy for CVE detection, Hadolint for Dockerfile configuration linting, and Docker Scout for dependency chain analysis, and applies a GPT-5 analysis layer across their combined output. The critical design decision is not the AI integration itself but what the AI layer is asked to do: correlate findings across scanners, weight them by exploitability and deployment context, generate plain-language explanations calibrated to a developer audience, and produce a single 0-to-100 security score rather than a list of findings. Detection is delegated to the established tools. The AI layer handles the translation problem that those tools were never designed to solve.
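The correlate, weight, and score steps described above can be illustrated with a minimal sketch. It is not DockSec's implementation: the `Finding` fields, the severity weights, the multipliers for exploitability and reachability, and the scoring formula are all assumptions chosen only to show the shape of the idea, and the identifiers used with it are placeholders.

```python
from dataclasses import dataclass

# Hypothetical severity weights; not DockSec's actual values.
SEVERITY_WEIGHT = {"CRITICAL": 10.0, "HIGH": 6.0, "MEDIUM": 3.0, "LOW": 1.0}


@dataclass(frozen=True)
class Finding:
    source: str          # scanner that reported it, e.g. "trivy" or "hadolint"
    identifier: str      # CVE ID or lint rule ID (placeholder values here)
    severity: str        # key into SEVERITY_WEIGHT
    exploit_known: bool  # assumed flag: a public exploit exists
    reachable: bool      # assumed flag: the application actually uses the code


def risk(finding: Finding) -> float:
    """Weight one finding by severity, exploitability, and deployment context."""
    weight = SEVERITY_WEIGHT[finding.severity]
    if finding.exploit_known:
        weight *= 2.0   # assumed multiplier for known exploits
    if not finding.reachable:
        weight *= 0.2   # assumed discount for code the app never calls
    return weight


def correlate(findings):
    """Merge reports of the same identifier, keeping the highest-risk one."""
    best = {}
    for f in findings:
        if f.identifier not in best or risk(f) > risk(best[f.identifier]):
            best[f.identifier] = f
    return list(best.values())


def prioritize(findings):
    """Return correlated findings, most urgent first."""
    return sorted(correlate(findings), key=risk, reverse=True)


def security_score(findings) -> int:
    """Collapse correlated findings into a single 0-to-100 score."""
    total = sum(risk(f) for f in correlate(findings))
    return max(0, 100 - round(total))
```

Under these assumed weights, removing the top entry returned by `prioritize` and calling `security_score` again raises the score, which is the re-scan feedback loop the single number is meant to create.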

The security scoring system deserves specific attention because it produced a behavioral outcome Patel had not predicted at the design stage. A numerical score that could improve with each remediation cycle created re-scan behavior that no other feature of the tool generated. Developers who received a score of 58 after an initial scan and watched it move to 74 after addressing the top three findings engaged differently with the security workflow than developers who received the same information presented as a static list. "The score was not a product decision," Patel says. "It was a behavioral one. The same vulnerability presented as a line in a report and as a reason your score dropped from 72 to 58 produces completely different responses, and the response is the only thing that actually matters for security outcomes." That insight has implications for how security tooling is evaluated: a tool with worse detection coverage but better remediation rates yields a more secure outcome than a tool that detects comprehensively and remediates rarely.

Digital Sovereignty and the Scan-Only Statistic

Forty percent of DockSec deployments run in scan-only mode, bypassing the GPT-5 analysis layer entirely and using the tool purely as a unified scanner orchestration interface. That figure is worth pausing on, because it is not primarily a story about cost sensitivity or AI skepticism. It is a story about data residency, regulatory constraint, and the emerging logic of digital sovereignty in security infrastructure.

Organizations operating in defense-adjacent industries, financial services under prudential regulation, and healthcare systems subject to cross-border data governance frameworks increasingly cannot send production Dockerfiles to external API endpoints regardless of how compelling the analytical output is. The European Union's NIS2 Directive, which entered into force in January 2023 with a national transposition deadline of October 2024 and extended mandatory cybersecurity requirements to a significantly broader range of critical infrastructure operators, creates explicit obligations around the security of data processed in third-party systems that apply directly to the kind of external API call that DockSec's AI layer requires. Organizations in scope for NIS2, and equivalent frameworks in other jurisdictions, face a regulatory calculus that frequently resolves in favor of reduced analytical capability over compliance exposure.

DockSec's 2026 roadmap includes support for local LLM providers including Llama and Mistral via Ollama, which addresses this constraint directly. But the significance of that roadmap item is not primarily technical. It represents an acknowledgment that security tooling which depends on cloud-based AI infrastructure is structurally inaccessible to a substantial portion of the organizations whose container environments carry the highest consequence if breached. Air-gapped deployment capability is not a feature for edge cases. It is a prerequisite for the tooling to reach the environments where the triage gap is most strategically significant, and the fact that forty percent of current deployments already bypass the AI layer suggests that the demand for sovereignty-compatible security tooling significantly exceeds what the commercial market has positioned itself to serve.

Open Governance as Critical Infrastructure Accountability

DockSec's acceptance as an OWASP Incubator Project in July 2025 established a governance framework around the tool that has implications beyond project credibility. OWASP acceptance requires transparency about what a tool does, the boundaries of its analytical claims, and the conditions under which its output should not be trusted. For a tool that uses AI to make prioritization recommendations about security findings in production environments, that transparency requirement is not procedural overhead. It is the minimum viable condition for trust inside security teams whose mandate includes protecting infrastructure whose failure would carry consequences across organizational and jurisdictional boundaries.

"OWASP acceptance meant that the tool had to be transparent about what it does, how it does it, and what its limitations are," Patel explains. "That is not just good governance. It is the only way to build trust with security teams who are rightfully skeptical of any tool claiming to use AI to make security decisions. You cannot ask people to trust a black box with their production infrastructure." That argument becomes considerably more urgent when the production infrastructure in question is not organizational IT but shared critical systems whose security posture affects populations and institutions well beyond the organizations operating them.

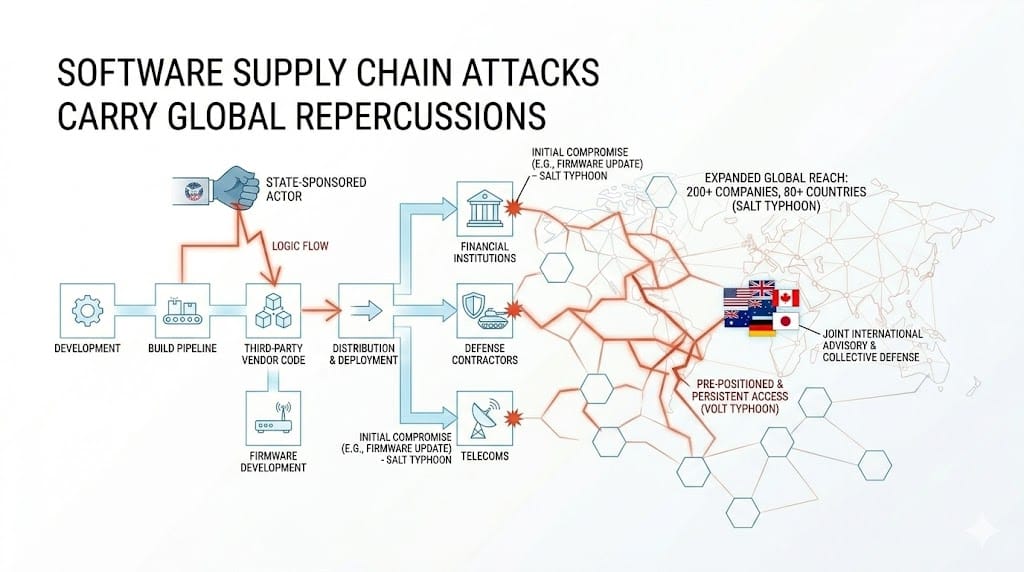

Container infrastructure now underlies financial clearing systems processing trillions of dollars in daily settlement volume, healthcare platforms holding patient records across multiple national jurisdictions, energy management tooling operating inside utilities that serve tens of millions of people, and communications infrastructure whose compromise has been a documented objective of state-sponsored operations across multiple recent campaigns. The security of that infrastructure is not governed by any single regulatory framework, is not addressable by any single vendor's product roadmap, and does not respect the organizational boundaries within which commercial security tooling is typically evaluated and procured. Open-source security tooling governed by a community rather than a commercial entity is one of the few accountability mechanisms that can operate across those jurisdictional and organizational boundaries without depending on a single vendor's incentive structure to determine what gets fixed and when. DockSec's MIT license and public development process mean that any organization, regulator, or security researcher in any jurisdiction can inspect the tool's logic, identify its failure modes, and contribute corrections back to the community without waiting for a vendor's quarterly release cycle or commercial prioritization decision.

That model of accountability is not unique to DockSec. It is the underlying logic of the open-source security tooling ecosystem, and its strategic significance has grown in direct proportion to the depth of container infrastructure's integration into systems whose failure carries consequences the commercial security market was not designed to price. The geopolitical and systemic dimensions of what community-governed security tooling can and cannot guarantee across critical infrastructure boundaries remain significantly underexamined in both the policy and technical literature, and that gap is one this publication intends to return to directly.

[See: Container Infrastructure Is a Shared Global Attack Surface]

What the Community Revealed

The most consequential lessons DockSec produced as a research artifact came not from the design process but from the community of practitioners who adopted it and then documented, through usage patterns and direct feedback, the distance between what Patel had assumed about the triage problem and what the problem actually looked like from inside the organizations trying to solve it. That distance was, in several places, significant enough to require a revision of the tool's core design assumptions.

The scan-only adoption rate was the first and most structurally important finding, not because it indicated that the AI layer was failing but because it indicated that the organizational preconditions for using AI-assisted security tooling were absent in a much larger proportion of deployments than the tool's design had anticipated. The second finding concerned the character of the guidance developers actually needed from the AI layer. Patel had designed the explanatory output to be action-oriented, giving developers specific remediation steps without requiring them to understand the underlying vulnerability mechanics. Community feedback revealed consistently that developers wanted the explanatory layer to answer a prior question: why is this a vulnerability, and why does it matter in the context of what this container is actually doing. Explanations that answered that question produced different engagement patterns and different remediation outcomes than explanations that answered only the procedural question of what to change.

"I built DockSec assuming the AI explanations would be the thing developers valued most," Patel reflects. "What I learned was that the score was what changed behavior, and the explanations were what built trust over time. Those are different problems and they needed different design thinking. I would have missed that entirely if the tool had not been open source with an active community telling me what they were actually experiencing." The implication extends beyond this specific tool: the security tooling that will actually move remediation rates is not the tooling with the most sophisticated detection capability but the tooling built close enough to real practitioner experience to know which part of the problem its sophistication needs to address.

The Strategic Case for Practitioner-Built Infrastructure

The DockSec roadmap for 2026 includes Docker Compose support for multi-container application analysis, Kubernetes manifest scanning for pod security policies and role-based access control configuration, and the local LLM support described above. Each addition addresses a specific failure mode that the container security ecosystem has not resolved through commercial means, and each reflects the same design logic that produced the tool in the first place: the practitioners who have been inside the failure, who understand both what the security output requires and what the development workflow can absorb, are better positioned than commercial vendors to design the translation layer that closes the gap between them.

That is not an argument against commercial security tooling. It is an argument about the structural limits of commercial incentives in security infrastructure markets where the organizations most exposed to consequence are frequently the organizations least able to influence vendor prioritization through procurement leverage. Community-governed open-source tooling operates outside those incentive constraints, which gives it a specific and underappreciated role in the broader security ecosystem: it serves the environments where the stakes are highest and the commercial market is least well-positioned to respond, governed by transparency requirements that commercial products are not subject to, and accessible across jurisdictions that commercial licensing and data governance requirements would otherwise exclude.

"The goal was never to build a company," Patel says. "It was to build the tool I needed in that moment in late 2024 and make sure nobody else had to spend three weeks debugging a 47-page report to find the three things that actually mattered. If DockSec does that reliably and stays open source and keeps improving through community contribution, that is the outcome I was building toward." The triage gap that Patel identified in that production incident is not a problem that will be closed by better detection tooling or larger security budgets. It is a design problem in the infrastructure layer of organizations running workloads whose compromise is a documented strategic objective of state-sponsored actors operating across jurisdictions that no single commercial vendor is positioned to address. Practitioners who understand both sides of that problem and are willing to build in public are not filling a market gap. They are performing a function the market was not designed to perform.