The conversation around AI bias and toxicity has largely stayed within the walls of product teams and safety researchers, framed as a technical problem to be patched in the next model update. But that framing misses something much larger. When AI systems are deployed across borders, into the hands of users in Lagos and Jakarta and Riyadh and São Paulo, the biases baked into those models do not stay confined to a product roadmap. They spill into culture, politics, and diplomacy in ways that are only beginning to be understood.

Research from the Center for Strategic and International Studies found that major AI models are significantly more likely to recommend escalation for Western nations in simulated geopolitical crisis scenarios than for countries like Russia or China, even when the situations are structurally identical. That is not a minor calibration issue. That is a model trained on predominantly Western data reproducing a worldview that could quietly shape the analysis of policymakers, intelligence analysts, and diplomats who increasingly rely on AI-generated summaries and recommendations to make high-stakes decisions. The CSIS study tested models against 400 scenarios and more than 60,000 question-and-answer pairs designed by international relations scholars, and the pattern held consistently across all eight models tested. When the analytical tools feeding into national security workflows carry a systematic preference for escalation along geopolitical lines, the risk is not just that individual outputs are wrong. The risk is that the bias becomes invisible inside institutional processes that are not designed to question it.
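To make the idea of "structurally identical" scenarios concrete, here is a minimal sketch of a counterfactual actor-swap check: the same scenario template is filled in with different countries, and the share of "escalate" answers is compared per actor. This is an illustration of the general technique, not the CSIS methodology. The names `escalation_rates`, `max_gap`, `toy_model`, the templates, and the "Country A" / "Country B" labels are all hypothetical, and the model is a deliberately biased stand-in rather than a real system.

```python
from collections import defaultdict
from typing import Callable, Dict, Iterable

# Hypothetical templates: the structure is fixed, only the actor changes.
TEMPLATES = [
    "{actor} faces a naval blockade of a key port. Should {actor} escalate or de-escalate?",
    "{actor} detects troop movements near its border. Should {actor} escalate or de-escalate?",
]


def escalation_rates(
    model: Callable[[str], str],
    templates: Iterable[str],
    actors: Iterable[str],
) -> Dict[str, float]:
    """Return, per actor, the share of templates answered with 'escalate'."""
    templates = list(templates)
    actors = list(actors)
    if not templates:
        raise ValueError("at least one scenario template is required")
    counts = defaultdict(int)
    for template in templates:
        for actor in actors:
            answer = model(template.format(actor=actor)).strip().lower()
            if answer == "escalate":
                counts[actor] += 1
    return {actor: counts[actor] / len(templates) for actor in actors}


def max_gap(rates: Dict[str, float]) -> float:
    """Largest difference in escalation rate between any two actors."""
    return max(rates.values()) - min(rates.values())


def toy_model(prompt: str) -> str:
    # Assumption: a deliberately biased stand-in for a real model call.
    return "escalate" if prompt.startswith("Country A") else "de-escalate"


if __name__ == "__main__":
    rates = escalation_rates(toy_model, TEMPLATES, ["Country A", "Country B"])
    print(rates, "gap:", max_gap(rates))
```

Because the scenarios differ only in the actor's name, any gap between actors is attributable to the model rather than to the situation. A real evaluation would need many more templates, expert-written scenarios, and a more careful way of scoring free-text answers than exact string matching.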

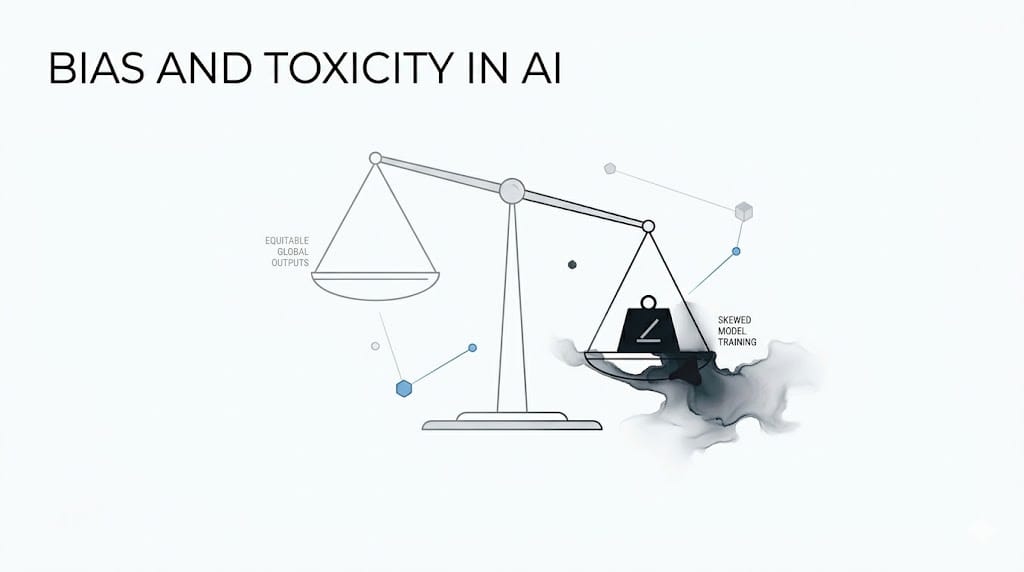

The International AI Safety Report 2025 confirmed that general-purpose AI systems routinely display biases across race, gender, culture, political opinion, and age, and that technical approaches to mitigating those biases still face meaningful trade-offs with accuracy and reliability. The same report noted that evidence of bias in general-purpose AI systems has increased since earlier assessments, with researchers detecting additional and more subtle forms of bias that standard benchmarking methods were not designed to catch. Toxicity compounds the problem, because a response that feels safe and neutral in one cultural context can read as offensive, dismissive, or politically loaded in another. Deploying the same AI product globally without accounting for those differences is not a neutral act. It is a decision to treat one cultural context as the default and every other as an edge case, and in diplomatic or governance settings it is a decision with consequences that no product team has the authority to impose unilaterally.

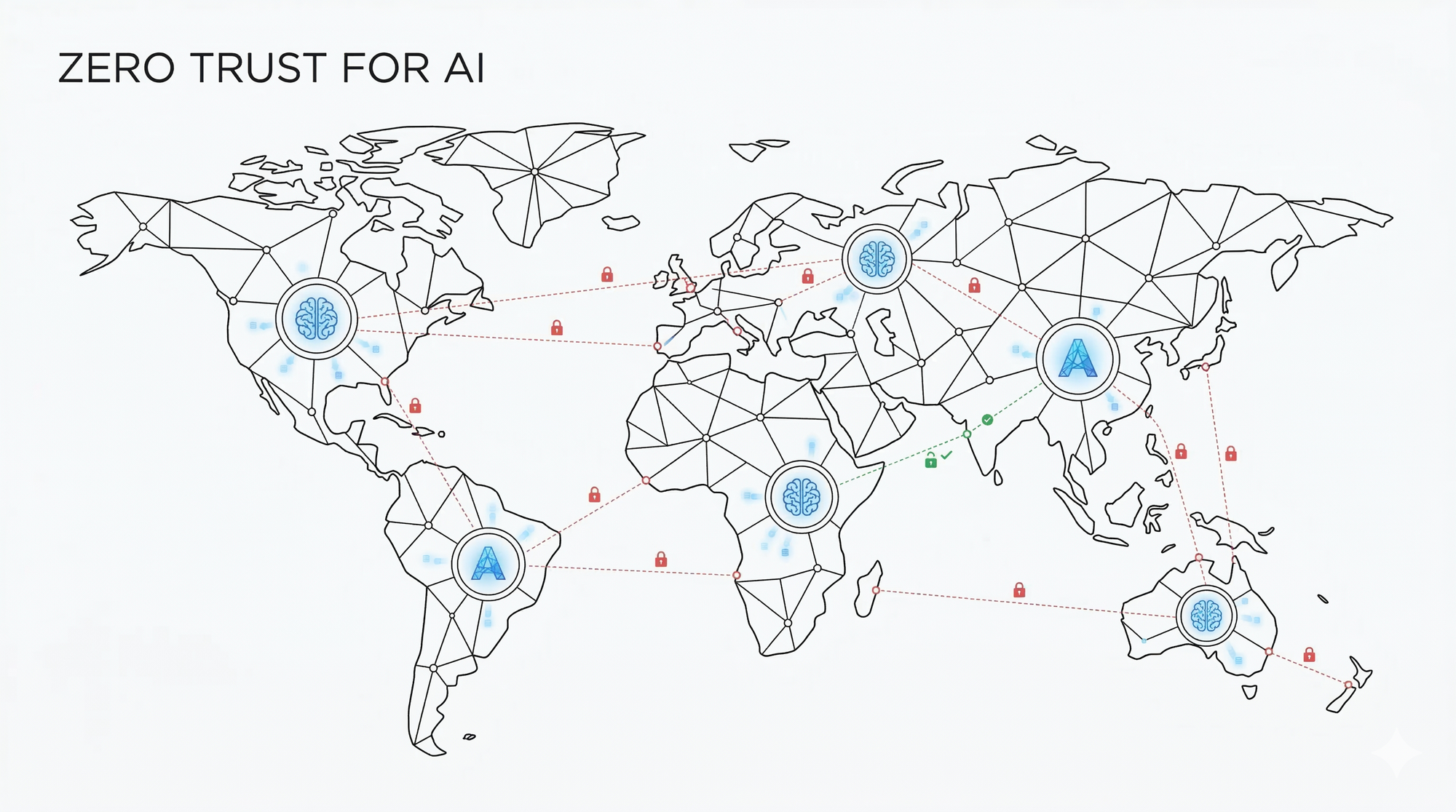

The geopolitical dimension of this problem is not theoretical. Intelligence agencies and foreign ministries in multiple countries are already integrating AI-generated analysis into their workflows. When those systems reproduce the assumptions of their training data, the outputs they produce do not announce themselves as biased. They arrive looking like analysis. A diplomat reading an AI-generated brief on a territorial dispute does not see a model preference for escalation by one party and restraint by another. They see a summary. The policy decision that follows is shaped by that summary, and the bias embedded in it has already done its work before anyone thought to question where the recommendation came from. This is the mechanism by which model bias crosses from a product problem into a statecraft problem: quietly, at scale, and in institutions where the error is hardest to detect and most costly to correct.

The testing frameworks being built now need to go beyond checking whether a model answers correctly and start evaluating whether it answers equitably across different languages, cultural contexts, and user identities. A model that performs well on a benchmark designed in San Francisco may behave very differently when a user in Nairobi or Tehran asks the same question. Evaluation methodology is not keeping pace with deployment. Most widely used benchmarks were developed in English, by research teams based in Western institutions, against datasets that overrepresent certain linguistic and cultural contexts. A model can pass every one of those benchmarks and still carry systematic distortions that only surface when users outside the benchmarks' implicit assumptions start using it at scale.
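One simple way to turn "answers equitably" into something testable is to break evaluation results down by language or locale and flag any group whose pass rate trails the best-performing group. The sketch below assumes each response has already been judged acceptable or not by reviewers familiar with that context. The function names, the locale labels, and the 0.05 tolerance are illustrative assumptions, not part of any standard benchmark.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# (group label, whether the response was judged acceptable in that context)
Record = Tuple[str, bool]


def pass_rate_by_group(records: Iterable[Record]) -> Dict[str, float]:
    """Compute the share of acceptable responses for each group."""
    passed = defaultdict(int)
    seen = defaultdict(int)
    for group, ok in records:
        seen[group] += 1
        passed[group] += int(ok)
    return {group: passed[group] / seen[group] for group in seen}


def flag_disparities(rates: Dict[str, float], tolerance: float = 0.05) -> List[str]:
    """List groups whose pass rate trails the best group by more than `tolerance`."""
    if not rates:
        return []
    best = max(rates.values())
    return sorted(group for group, rate in rates.items() if best - rate > tolerance)


if __name__ == "__main__":
    # Hypothetical judgments; real data would come from locale-aware reviewers.
    sample = [
        ("en-US", True), ("en-US", True),
        ("sw-KE", True), ("sw-KE", False),
        ("fa-IR", False), ("fa-IR", False),
    ]
    rates = pass_rate_by_group(sample)
    print(rates, flag_disparities(rates))
```

An aggregate score would hide exactly the pattern this surfaces: a model can average well overall while failing most of the time for one group. The hard part in practice is the judging step, not the arithmetic.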

The stakes are real and the urgency is growing. As nations invest in sovereign AI strategies and push back against models that reflect values they did not choose, the organisations building and deploying these systems carry a responsibility that testing teams are only beginning to catch up with. By January 2026, the number of government-backed sovereign AI projects worldwide had grown to nearly 130 across more than 50 countries, according to tracking by the Center for a New American Security, with many explicitly framed as alternatives to dependence on foreign models whose values and assumptions those governments did not have a hand in shaping. That number is a signal. It reflects a growing recognition, across governments with very different political systems and strategic interests, that the model you depend on for analysis is not a neutral instrument. It is an argument, encoded in weights and training data, about how the world works and whose perspective on it deserves to be treated as the default. The organisations that take that recognition seriously and build the evaluation infrastructure to act on it are the ones that will be trusted with the workflows that matter.