API sprawl does not arrive as a discrete event that a single governance decision could have prevented. It accumulates through the ordinary mechanics of software development at scale: teams build endpoints to solve immediate integration needs, product cycles move faster than documentation practices, and organizational visibility into what has been deployed consistently lags behind the rate at which new endpoints are created.

A microservices architecture that begins with a small number of well-governed service interfaces tends to expand as teams decouple functionality, version endpoints to maintain backward compatibility, and promote experimental services to production before any formal security review has taken place. Deprecated endpoints frequently remain active because decommissioning requires coordination across teams that are absorbed in current delivery priorities, and the result is that production environments accumulate endpoints that security teams never reviewed alongside endpoints that no longer have any engineer maintaining them.

Shadow APIs typically emerge when individual teams create integrations directly rather than routing through a formal review process, often under deadline pressure or during prototyping work that gets absorbed into production infrastructure without triggering the review cycle that a net-new deployment would have required. Zombie APIs persist because removing them requires someone to take explicit ownership of that action, and in environments where API ownership is distributed across teams without centralized tracking, that ownership is frequently unclear to everyone involved.

The cumulative effect is an API inventory where authentication and authorization configurations vary widely across endpoints built at different times by different teams under different security standards, where security teams lack complete visibility into what is actually running, and where the attack surface expands continuously through a process that has no natural limiting mechanism as long as development velocity is increasing.

That was the state of API governance before agentic AI arrived, and the security implications of that state, from data exfiltration through zombie endpoints to compliance exposure through undocumented data flows, have been documented in operational detail across organizations at every scale. What autonomous agents have done to that already ungoverned landscape is not simply add more endpoints to the inventory. It has introduced a qualitatively different category of API creation and consumption that existing governance frameworks have no mechanism to address.

Agentic AI did not introduce new categories of API risk. It gave the existing ones the ability to compound autonomously, at a pace no human-led governance process was designed to track.

An Entropy Explosion, Not Just an API Explosion

The conventional framing of AI's impact on the API landscape focuses on velocity: more APIs, created faster, by more developers using tools that generate plausible output without enforcing quality standards. That framing captures part of what is happening but misses the more consequential shift that agentic AI introduces.

Chetan Conikee, Founder and CTO at Qwiet AI, names that shift in terms the velocity argument alone cannot capture. "This isn't just an API explosion," Conikee argues. "It's an entropy explosion. AI is introducing loosely governed endpoints that are poorly documented and often invisible to traditional discovery mechanisms." In environments where agents are scaffolding endpoints on the fly, generating service layers, and composing backend logic as part of orchestrated tasks, those endpoints are not the product of a developer making a conscious architectural decision. They are byproducts of an agent completing a task, and they enter the production environment without ever passing through the documentation, review, or registration processes that human-initiated API creation nominally requires.

Ankit Awasthi, Director of Engineering at Twilio, identifies the mechanism that makes agentic API creation structurally different from the shadow IT problem that preceded it. What used to be linear workflows between services is now a dynamic orchestration layer where agents select and call APIs at runtime, and protocols like the Model Context Protocol (MCP) are accelerating that shift by enabling agents to collaborate, invoke APIs across domains, and construct new capabilities in real time without any human directing each individual decision in that chain.

"These APIs may perform critical tasks," Awasthi notes, "yet lack observability, access controls, or formal ownership. And when AI agents chain APIs together, any weak link introduces compounding risk, especially in production-like environments." The shadow API that a human developer failed to decommission was a static risk sitting in an undiscovered inventory. The shadow API that an agent created as a side effect of an orchestration task is a live endpoint performing real functions inside a system that has no record it exists and no team assigned to its security posture.

The pressure to build APIs for agent consumption rather than developer consumption is also creating a new category of endpoints, designed around the machine-readable contract requirements that autonomous models need to reason and act effectively. Those endpoints are being created at a pace that outstrips the governance infrastructure around them, because the organizational processes for reviewing and registering new APIs were built for a world where a human made a deliberate decision to create each one.

The Vibe Coding Risk When Agents Are the Consumer

The concern about AI-assisted vibe coding, where developers accept generated code suggestions without deeply understanding their architectural implications, has been widely framed as a code quality and developer accountability problem, bounded by whatever judgment the human brings to the review process. Cezar Grzelak, CSO at Versos AI, identifies a more structurally serious version of the problem, one that the developer-focused framing misses entirely.

"AI systems don't possess context, intent, or experience," Grzelak argues. "That means they can't reason about trade-offs like a senior developer would. Two endpoints may look similar syntactically but require completely different authentication rules based on downstream system behavior or regulatory exposure. If that distinction is missed, it leaves us with a vulnerability hidden inside something that looks right. The real risk is not that AI generates destructive code. It generates plausible code that no one owns or questions."

Eric Barroca, Founder and CEO at Vertesia, identifies the dimension of this problem that becomes critical specifically in an agentic context, and it inverts the common assumption that AI tools primarily create risk for junior developers who lack the judgment to evaluate generated output. "While GenAI tools are formidable for helping less experienced developers learn and accelerate development," Barroca notes, "paradoxically these tools tend to be most powerful in the hands of senior developers who know how to properly instruct, control the AI, and can anticipate how the generated code will impact the wider system and business architecture."

The governance gap in AI-assisted development is therefore not primarily a skills problem. It is an accountability problem, and it becomes an architectural problem when the consumer of a vibe-coded API is not a senior developer who can recognize an authentication misconfiguration before it reaches production but an autonomous agent that will use the interface however it can if no explicit authorization constraint prevents it.

Before an agent can act on an API it has not encountered before, it has to reason about the shape of the contract from the schema alone, and APIs that rely on implicit parameter relationships or external documentation to communicate constraints give the agent no mechanism for understanding those constraints before it violates them. Srinivasan Sekar and Sai Krishna of TestMu AI encountered this failure mode in production, where a test environment configuration API that developers found flexible and functional produced a 40 percent agent failure rate because the agents could not interpret the implicit rules that human developers had always navigated through external documentation.
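To make the failure mode concrete, the sketch below shows one way an implicit rule could be moved into the schema itself. The endpoint, its fields, and the rule that a `safari` session requires a `macos` host are hypothetical illustrations, not TestMu AI's actual API. The point is that the dependency is declared as data an agent can read, and violations come back as specific, machine-readable errors. Standard JSON Schema (draft-07 and later) can express the same kind of dependency with its `if`/`then` keywords; the hand-rolled validator here only keeps the example dependency-free.

```python
from typing import Any

# Hypothetical schema for a test-environment configuration call.
# The browser/os dependency is declared here as data instead of
# living in a documentation page the agent will never read.
ENV_CONFIG_SCHEMA: dict[str, Any] = {
    "required": ["browser", "os"],
    "enums": {
        "browser": ["chrome", "firefox", "safari"],
        "os": ["windows", "linux", "macos"],
    },
    # Each rule reads: if <field> == <value>, then <other field> must be in <allowed>.
    "conditionals": [
        {
            "if": ("browser", "safari"),
            "then": ("os", ["macos"]),
            "reason": "safari sessions are only available on macos hosts",
        }
    ],
}


def validate(request: dict[str, Any], schema: dict[str, Any]) -> list[str]:
    """Return machine-readable error strings; an empty list means the request is valid."""
    errors: list[str] = []
    for name in schema["required"]:
        if name not in request:
            errors.append(f"missing required field: {name}")
    for name, allowed in schema["enums"].items():
        if name in request and request[name] not in allowed:
            errors.append(f"{name} must be one of {allowed}")
    for rule in schema["conditionals"]:
        if_field, if_value = rule["if"]
        then_field, then_allowed = rule["then"]
        if request.get(if_field) == if_value and request.get(then_field) not in then_allowed:
            errors.append(
                f"when {if_field}={if_value}, {then_field} must be one of "
                f"{then_allowed}: {rule['reason']}"
            )
    return errors
```

A request such as `{"browser": "safari", "os": "windows"}` is rejected with an error that states the rule it broke, which gives an agent something to correct against rather than a bare failure.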

Awasthi names the operational consequence directly. When AI agents consume APIs generated through vibe coding, "agents will naively retry, misinterpret, or misuse APIs unless the interfaces are highly deterministic and safe for autonomous use," he explains. The design standards that experienced developers apply, because they understand the downstream consequences of getting them wrong, are precisely what vibe-coded APIs are most likely to lack, and agents have no mechanism for recognizing that absence before acting on it at machine speed.
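One widely used way to make an endpoint safe for the naive retries Awasthi describes is an idempotency key. The caller attaches a unique key to each logical request, and the server replays the stored result when it sees the same key again instead of performing the side effect twice. Awasthi does not prescribe this specific technique; the minimal sketch below is an assumption for illustration, with a hypothetical in-memory `ProvisioningService` standing in for a real endpoint and its persistent storage.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class ProvisioningService:
    """Hypothetical endpoint that creates a test environment.

    Callers supply an idempotency key; repeating a call with the same key
    returns the original result instead of creating a second environment.
    Results are held in memory here; a real service would persist them.
    """
    _results: dict[str, dict] = field(default_factory=dict)
    created_count: int = 0

    def create_environment(self, idempotency_key: str, browser: str) -> dict:
        if idempotency_key in self._results:
            previous = self._results[idempotency_key]
            # Same key with a different payload is a caller error, not a retry.
            if previous["browser"] != browser:
                raise ValueError("idempotency key reused with a different payload")
            # Genuine retry: replay the stored result, no new side effect.
            return previous
        self.created_count += 1
        result = {"environment_id": str(uuid.uuid4()), "browser": browser}
        self._results[idempotency_key] = result
        return result
```

Rejecting a reused key with a different payload matters for agents in particular, since it turns a silent misinterpretation into an explicit error the agent can reason about.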

The Authorization Gap That Agents Expose

The security architecture of most API environments was designed around a human consumer interacting through an application interface, and that design assumption creates a specific and consequential gap when the consumer is an autonomous agent. Barroca describes the mechanism in terms that practitioners who have not yet worked directly with agentic deployments may not have encountered.

"When a model finds and accesses a shadow AI API via a tool, it will find a way to use it if possible, bypassing the security if there's not a strong authorization layer on this API," Barroca explains. "When these APIs are accessed by users via user interfaces, you can put a layer of the security in the app, and users typically will not try to bypass those safeguards. With models, it's a completely different story. You need to design them in a way to give the right set of permissions, contextualized, so that the model can only do what it's allowed to based on the task, the user on behalf, and so on."

That distinction between application-layer security and API-level authorization is the structural gap through which agentic systems access functionality that was never intended to be available to autonomous consumers, and it exists in most enterprise API environments today because those environments were never designed with the assumption that the caller might be an agent operating at machine speed with its own permissions logic and no organizational context about why a particular endpoint should not be invoked in a particular situation.
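Barroca's point about contextualized permissions can be sketched as an authorization check that runs at the API itself rather than in an application UI. In the minimal example below, which assumes hypothetical user grants and task scopes held in plain dictionaries, an agent's call is allowed only when the required permission appears both in the grants of the user it acts for and in the scope of the task it was launched to perform. Taking the intersection, rather than the union, means an agent can never exceed either boundary, whichever endpoints it manages to discover.

```python
from dataclasses import dataclass

# Hypothetical permission data. A real deployment would load these from an
# identity provider and a task registry rather than hard-coded dictionaries.
USER_PERMISSIONS: dict[str, set[str]] = {
    "alice": {"orders:read", "orders:refund"},
    "bob": {"orders:read"},
}
TASK_SCOPES: dict[str, set[str]] = {
    "summarize_orders": {"orders:read"},
    "process_refund": {"orders:read", "orders:refund"},
}


@dataclass(frozen=True)
class AgentCall:
    agent_id: str
    on_behalf_of: str  # the human user the agent is acting for
    task: str          # the task the agent was launched to perform
    permission: str    # the permission the target endpoint requires


def authorize(call: AgentCall) -> bool:
    """Allow the call only if BOTH the delegating user and the declared task
    grant the required permission (intersection, never union).
    Unknown users or tasks get an empty grant set and are denied."""
    user_grants = USER_PERMISSIONS.get(call.on_behalf_of, set())
    task_grants = TASK_SCOPES.get(call.task, set())
    return call.permission in (user_grants & task_grants)
```

Under this sketch, an agent summarizing orders for a user who could issue refunds still cannot issue one, because the summarization task never granted that permission.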

Conikee proposes the most policy-relevant response in the current practitioner literature and names it with a specificity that the regulatory conversation around agentic AI has not yet matched. "What's needed is a shift from static governance to dynamic alignment," Conikee argues, describing what he terms Protection-Level Agreements: negotiated policies that define acceptable behavior, risk thresholds, and operational expectations not just for humans but for agents and services operating within the same infrastructure. The concept treats an agent's API access the way a compliance framework treats a contractor's access to sensitive data, with explicit, contextualized, auditable permissions that define not just what can be accessed but under what conditions, for what purpose, and with what accountability trail attached. That governance model does not yet exist as a recognized standard in most organizations.
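Protection-Level Agreements are Conikee's concept rather than a published specification, so any encoding of one is an assumption. The sketch below shows one hypothetical way to make the idea concrete for a single agent: permitted actions, permitted purposes, a record-count limit standing in for a risk threshold, and an audit entry written for every decision, whether allowed or denied. The class and field names are illustrative only.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ProtectionLevelAgreement:
    """Hypothetical encoding of a Protection-Level Agreement for one agent.

    Field names are illustrative assumptions, not a published standard.
    """
    agent_id: str
    allowed_actions: set[str]
    allowed_purposes: set[str]
    max_records_per_call: int  # simple stand-in for a risk threshold
    audit_log: list[dict] = field(default_factory=list)

    def evaluate(self, action: str, purpose: str, record_count: int) -> bool:
        """Decide whether a call is within the agreement and record why."""
        reasons: list[str] = []
        if action not in self.allowed_actions:
            reasons.append("action not permitted")
        if purpose not in self.allowed_purposes:
            reasons.append("purpose not permitted")
        if record_count > self.max_records_per_call:
            reasons.append("exceeds record threshold")
        allowed = not reasons
        # Every decision, allowed or denied, leaves an accountability trail.
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent_id": self.agent_id,
            "action": action,
            "purpose": purpose,
            "record_count": record_count,
            "allowed": allowed,
            "reasons": reasons,
        })
        return allowed
```

Recording the stated purpose alongside the action is what distinguishes this from a plain access-control list: the same read can be permitted for reconciliation and denied for an export.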

What Building for Agents Actually Requires

The API security problem in an agentic environment cannot be resolved through governance frameworks alone, because part of the root cause is architectural and the architectural response requires a fundamentally different approach to how APIs are designed and described rather than simply how they are governed after the fact.

APIs designed for human developers carry implicit contracts, flexible multi-purpose endpoints, and deeply nested data structures that experienced developers navigate through familiarity and external documentation. An API's machine-readable contract needs to describe its business purpose, its prerequisites, and its potential side effects in the schema itself, not in a documentation page that an agent will never read, because an agent operating on that contract has no mechanism for resolving ambiguity outside of what the schema explicitly declares.

Sekar describes the organizational and engineering commitment that building for agent consumption actually requires at TestMu AI. "We must now build APIs optimized for consumption by machines, which requires a fundamentally different design philosophy than the one we use for human-centric development," Sekar explains. "This means favoring specific, single-purpose endpoints and defining all constraints explicitly within the schema itself." Exposing only what is absolutely essential for a given task at each endpoint reduces the blast radius of any authorization failure and limits the surface area that a misconfigured agent permission can reach.
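As a rough illustration of Sekar's principle, the sketch below defines hypothetical single-purpose request types and derives a machine-readable contract from each, so that allowed values and numeric bounds travel with the schema instead of living in separate documentation. It uses only Python's standard `dataclasses` and `typing` modules. The output shape loosely resembles JSON Schema but is an assumption, and enforcing the bounds at request time is a separate step not shown here.

```python
from dataclasses import dataclass, field, fields
from typing import Literal, get_args, get_origin


@dataclass(frozen=True)
class StartSessionRequest:
    """Start exactly one browser session; this endpoint does nothing else."""
    browser: Literal["chrome", "firefox"]
    timeout_seconds: int = field(metadata={"minimum": 30, "maximum": 3600})


@dataclass(frozen=True)
class StopSessionRequest:
    """Stop one existing browser session by ID."""
    session_id: str


def describe(request_type: type) -> dict:
    """Emit a machine-readable contract for a hypothetical single-purpose request type."""
    properties: dict[str, dict] = {}
    for f in fields(request_type):
        spec: dict = {}
        if get_origin(f.type) is Literal:
            spec["enum"] = list(get_args(f.type))  # allowed values, stated explicitly
        elif f.type is int:
            spec["type"] = "integer"
        elif f.type is str:
            spec["type"] = "string"
        spec.update(f.metadata)  # explicit bounds declared on the field itself
        properties[f.name] = spec
    return {
        "name": request_type.__name__,
        "description": (request_type.__doc__ or "").strip(),
        "properties": properties,
        "required": [f.name for f in fields(request_type)],
    }
```

Splitting start and stop into separate types keeps each contract small: an agent granted only `StopSessionRequest` has no parameter through which it could start anything.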

The production evidence from TestMu AI makes the investment calculus concrete in a way that governance arguments alone cannot. After encountering the 40 percent agent failure rate on their human-optimized API, the team built a parallel AI-optimized API layer alongside their existing developer-facing API. "The AI version takes a test result object that was previously nested four levels deep and flattens it into a single-level structure with explicit relationship IDs," Krishna explains. "It tripled our schema size but cut agent processing time by 70 percent. The investment was significant, but necessary."
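The flattening Krishna describes can be sketched as follows. The four-level shape used here (run, suite, test, step) and all field names are hypothetical; TestMu AI's actual schema is not described in the source. Each output record sits at a single level and carries the IDs of all its ancestors, so an agent can, for example, filter for failed steps and still know which test and suite they belong to without traversing a tree.

```python
def flatten_run(run: dict) -> list[dict]:
    """Flatten a hypothetical run -> suites -> tests -> steps tree into
    single-level records that reference their ancestors by explicit ID."""
    records: list[dict] = [{"kind": "run", "id": run["id"], "status": run["status"]}]
    for suite in run["suites"]:
        records.append({"kind": "suite", "id": suite["id"],
                        "run_id": run["id"], "name": suite["name"]})
        for test in suite["tests"]:
            records.append({"kind": "test", "id": test["id"], "run_id": run["id"],
                            "suite_id": suite["id"], "status": test["status"]})
            for step in test["steps"]:
                records.append({
                    "kind": "step",
                    "id": step["id"],
                    "run_id": run["id"],
                    "suite_id": suite["id"],
                    "test_id": test["id"],
                    "status": step["status"],
                    "message": step.get("message", ""),
                })
    return records


nested = {
    "id": "run-1", "status": "failed",
    "suites": [{
        "id": "s-1", "name": "checkout",
        "tests": [{
            "id": "t-1", "status": "failed",
            "steps": [
                {"id": "st-1", "status": "passed"},
                {"id": "st-2", "status": "failed", "message": "timeout"},
            ],
        }],
    }],
}
flat = flatten_run(nested)
failed_steps = [r for r in flat if r["kind"] == "step" and r["status"] == "failed"]
```

Repeating ancestor IDs on every record makes each record larger, but no value in the output is itself a nested object or list, which is the property the flattening is meant to buy.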

The behavioral contract versioning model that Sekar and Krishna developed from that experience connects directly to the Protection-Level Agreements framing that Conikee identifies as the governance response to the authorization gap. Rather than versioning APIs purely around syntactic changes to the schema, agents subscribe to a behavioral contract that guarantees consistent outcomes for a given input regardless of how the underlying implementation evolves, and new behavioral contracts are introduced with transition time when a change in agent-observable behavior is required. That model makes agentic API governance auditable in the way that traditional API versioning never was, because it makes the behavioral guarantee itself a first-class managed artifact rather than an implicit consequence of the schema version.
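A minimal sketch of behavioral contract versioning, under stated assumptions, might pin each contract to a set of golden input/output examples, refuse to publish an implementation that no longer reproduces them, and enforce a transition window by retiring a superseded contract after a sunset date. The `BehavioralContract` and `ContractRegistry` names, the golden-example mechanism, and the sunset field are all hypothetical; the source does not describe how Sekar and Krishna implement their model.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, Optional


@dataclass
class BehavioralContract:
    """Hypothetical record for one behavioral contract an agent can subscribe to."""
    contract_id: str
    # Golden input/output pairs that define the guaranteed behavior.
    examples: list[tuple[dict, dict]]
    handler: Callable[[dict], dict]
    sunset: Optional[date] = None  # end of the transition window, if superseded

    def conforms(self) -> bool:
        """True if the current implementation still honors every golden example."""
        return all(self.handler(inp) == out for inp, out in self.examples)


class ContractRegistry:
    """Hypothetical registry that agents call by contract ID, not by schema version."""

    def __init__(self) -> None:
        self._contracts: dict[str, BehavioralContract] = {}

    def publish(self, contract: BehavioralContract) -> None:
        # Refuse an implementation change that breaks the behavioral guarantee.
        if not contract.conforms():
            raise ValueError(f"{contract.contract_id} violates its behavioral examples")
        self._contracts[contract.contract_id] = contract

    def call(self, contract_id: str, payload: dict, today: date) -> dict:
        contract = self._contracts[contract_id]
        if contract.sunset is not None and today > contract.sunset:
            raise LookupError(
                f"{contract_id} retired on {contract.sunset}; migrate to a newer contract"
            )
        return contract.handler(payload)
```

Because the golden examples are checked at publish time, the implementation behind a contract ID can be rewritten freely, and the registry itself becomes an auditable record of which behaviors were promised and when each one was retired.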

When the API Governance Gap Becomes a Global Problem

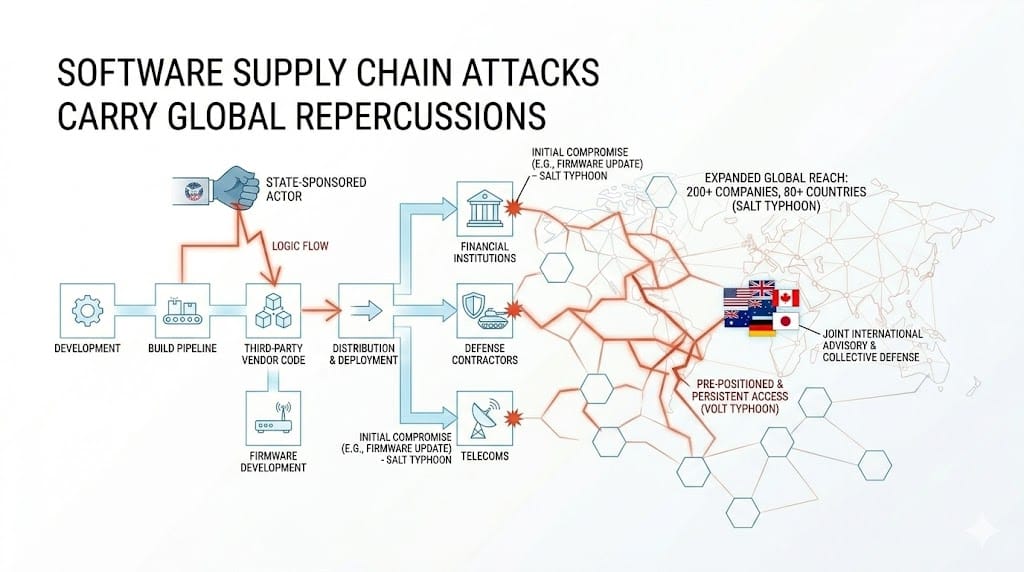

The governance failures described across the preceding sections are serious organizational security problems in isolation. They become strategic problems when you account for what APIs are actually connecting and where those connections cross organizational and jurisdictional boundaries simultaneously.

APIs are the integration layer of modern critical infrastructure across financial services, healthcare, energy, and government, and they operate across dozens of jurisdictions simultaneously, all subject to the same agentic consumption patterns, shadow endpoint risks, and authorization gaps that the contributors identify from their respective vantage points. When an autonomous agent chains together APIs from multiple organizations in a single orchestration task and one of those APIs is an undocumented endpoint with no authorization layer that the agent found because it was network-discoverable, the blast radius of that interaction does not respect organizational boundaries or the regulatory frameworks that govern each organization individually.

It propagates through the integration the agent constructed, across the data flows that integration touches, and into the systems of every organization connected to those data flows, and the organizations at either end of that interaction may have no visibility into what was accessed, when, or by what agent acting on whose authorization.

The container infrastructure running those API-connected applications compounds the exposure further. A vulnerable container image propagates into every environment built on top of it, and that container is almost always running an application that exposes its functionality through an API, so the container attack surface and the API attack surface are the same surface approached from different layers. [See: Container Infrastructure Is a Shared Global Attack Surface] Addressing the governance gap at one layer without addressing the other leaves the exposure structurally intact, regardless of how much investment is directed at either layer individually.

Grzelak identifies the most strategically significant version of this risk, one that the security community has not yet absorbed with the seriousness the operational evidence warrants. Beyond the risks of APIs generated through vibe coding without security review, he warns of a realistic risk that malicious APIs will be published deliberately to mimic legitimate vendor APIs, built and exposed purely to be picked up by agents during autonomous API discovery and orchestration sessions. "This could result in a well-meaning developer who vibe coded a small project unintentionally incorporating something that is potentially quite dangerous to its users," Grzelak argues. In an agentic context that risk scales with agent adoption, because agents selecting and calling APIs at runtime have no native mechanism for distinguishing a malicious API that presents a legitimate schema from the genuine endpoint it was designed to impersonate.
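There is no complete defense against a convincing impersonation, but one partial mitigation is to let agents call only APIs that a human has reviewed, pinned to both the exact hostname and a fingerprint of the schema that was reviewed. The sketch below illustrates that idea under explicit assumptions and is not a vetted control: the allowlist is a hypothetical in-memory dictionary, and the fingerprint is a SHA-256 hash over a canonical JSON encoding. It catches lookalike domains and silently altered schemas, but it cannot detect a legitimate host that has itself been compromised, and it does not replace TLS certificate validation.

```python
import hashlib
import json
from urllib.parse import urlparse


def schema_fingerprint(schema: dict) -> str:
    """SHA-256 over a canonical JSON encoding (sorted keys, no extra whitespace),
    so the same schema always hashes the same regardless of key order."""
    canonical = json.dumps(schema, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical allowlist: exact hostname -> fingerprint recorded at human review.
APPROVED_APIS: dict[str, str] = {}


def approve(base_url: str, schema: dict) -> None:
    """Record a reviewed vendor API (hypothetical review workflow)."""
    APPROVED_APIS[urlparse(base_url).hostname] = schema_fingerprint(schema)


def is_trusted(base_url: str, advertised_schema: dict) -> bool:
    """Reject APIs on unapproved hosts, and approved hosts whose advertised
    schema no longer matches the one that was reviewed."""
    host = urlparse(base_url).hostname
    expected = APPROVED_APIS.get(host)
    return expected is not None and expected == schema_fingerprint(advertised_schema)
```

Matching the exact hostname, rather than a substring or a display name, is what stops a lookalike such as `api.payments-example.com` from inheriting the trust granted to `api.payments.example.com`, even when it advertises an identical schema.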

The open-source tooling that powers most agentic API infrastructure compounds that targeting risk further, because the dependency chains that agents traverse when discovering and calling APIs are publicly documented and the vulnerability disclosures against those dependencies are indexed in public CVE databases the moment they are filed, giving adversaries the same starting information as defenders with fewer organizational constraints on how fast they can move against it. [Read: Why Open Source Technologies Are a Preferred Target for State-Sponsored Actors] State-sponsored actors with the resources to conduct sustained supply chain operations have both the capability and the strategic incentive to target agentic API infrastructure in critical sectors, and the authorization gap that each of the contributors identifies from their respective vantage points is the structural condition that makes that targeting viable at the scale agentic AI now operates.

What Governance Looks Like When Agents Are in the Loop

The path from the current state of agentic API governance to something adequate to the risk requires changes at the architectural, organizational, and policy levels simultaneously, and the contributors are consistent on where that path begins regardless of which dimension of the problem their analysis addresses most directly.

"APIs are the primary interface between AI agents and the systems they operate," Conikee underscores. "If you don't have a complete, real-time view of your API ecosystem, then you have no idea what your AI systems can do or what they've already done." Visibility is the prerequisite for every other governance capability, and in an environment where agents are generating and consuming endpoints that were never entered into any inventory, achieving that visibility requires instrumentation at the infrastructure level rather than reliance on the documentation processes that human developers were already failing to follow consistently before agentic AI arrived.
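The infrastructure-level visibility Conikee calls for can start with something as simple as reconciling observed traffic against the registered inventory. The sketch below assumes that gateway or proxy logs can be reduced to (method, path) pairs and that the registry records a lifecycle status per endpoint; both formats, and the example endpoints, are hypothetical. It normalizes numeric and UUID path segments, then reports endpoints that receive traffic but are missing from the inventory (shadow candidates), deprecated endpoints that are still being called (zombie candidates), and active endpoints that saw no traffic in the window.

```python
import re

# Hypothetical inventory: normalized endpoint -> lifecycle status from the API registry.
INVENTORY: dict[str, str] = {
    "GET /orders/{id}": "active",
    "POST /orders": "active",
    "GET /v1/customers/{id}": "deprecated",
}

# Matches a path segment that is all digits or a lowercase UUID.
_ID_SEGMENT = re.compile(
    r"/(\d+|[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12})(?=/|$)"
)


def normalize(method: str, path: str) -> str:
    """Collapse ID-like path segments so /orders/42 matches /orders/{id}."""
    return f"{method.upper()} {_ID_SEGMENT.sub('/{id}', path)}"


def reconcile(observed_calls: list[tuple[str, str]]) -> dict[str, list[str]]:
    """Compare observed (method, path) traffic with the registered inventory."""
    seen = {normalize(m, p) for m, p in observed_calls}
    return {
        # Receiving traffic but absent from the inventory.
        "shadow": sorted(e for e in seen if e not in INVENTORY),
        # Marked deprecated yet still being called.
        "deprecated_but_live": sorted(e for e in seen if INVENTORY.get(e) == "deprecated"),
        # Registered as active but not observed in this window.
        "no_traffic": sorted(e for e, s in INVENTORY.items() if s == "active" and e not in seen),
    }
```

An endpoint that an agent scaffolded during an orchestration task would surface in the `shadow` list the first time it carries traffic, whether or not anyone ever documented it.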

Awasthi frames the organizational disposition that makes the governance work sustainable rather than reactive. "Treat APIs not just as code, but as operational contracts, especially for those used by agents," he argues. "That means defining clear interfaces, strong schemas, versioning, and guardrails that make them safe for autonomous invocation. Just like you'd secure a public-facing API, internal APIs used by agents now need similar discipline." In an MCP-enabled world where agents collaborate through APIs across organizational boundaries, applying public-API security standards to internal endpoints is the minimum viable posture for infrastructure that agents are already treating as externally accessible regardless of how it was originally classified.

Grzelak offers the most direct assessment of what the agentic era requires of organizations that already have API governance frameworks in place. "Retain and reinforce the processes already in place aimed at gatekeeping your API usage," he argues. "The proliferation of coding assist AI is akin to an explosion of junior tech workforce within the company ranks. More code will be generated more rapidly and vulnerabilities introduced via consumption and exposure of new APIs will require tools and practices that enforce the same checks and balances as before but at an even higher rate."

Sekar closes the argument from the engineering side with an observation that ties the architectural response to the governance imperative. "The companies building the most reliable agentic systems aren't necessarily the ones with the most sophisticated AI models," Sekar notes. "They're the ones who've done the hard work of redesigning their API foundations to speak the language machines understand." The governance problem that agentic AI creates is not a new problem requiring an entirely new framework. It is the existing API sprawl problem running faster and deeper than the existing governance infrastructure was built to handle, and the organizations best positioned to manage it are the ones that treat the architectural and the governance responses as the same problem requiring a coordinated answer.