Supply chain risk now ranks third in OWASP's 2025 Top 10 for Large Language Model Applications, behind prompt injection in first place and sensitive information disclosure in second. Over the same period, malicious package uploads to open-source repositories jumped 156% in the past year, according to Sonatype's annual State of the Software Supply Chain report.

But volume alone does not capture what has actually changed. The more significant shift is in the geometry of the attack surface itself. Understanding it requires looking beyond the artifact layer to every component that now participates autonomously in how software gets built, trained, deployed, and updated.

The Expanding Attack Surface

AI systems now touch the supply chain at multiple layers simultaneously, and each layer carries its own set of vulnerabilities that existing controls were not designed to catch.

Coding agents like Cursor, Claude, and GitHub Copilot Workspace operate as autonomous participants in the build process, selecting dependencies, executing steps, and committing changes at speeds no manual review process can match. The attack surface they create is distinct from what artifact scanning addresses. Prompt injection is the primary vector: malicious instructions embedded in code comments, documentation strings, or external data sources are processed by the agent as legitimate directives rather than as content to be evaluated. Typosquatting compounds this at scale. Thousands of malicious packages identified in 2024 targeted AI libraries under names like openai-official, chatgpt-api, and tensorfllow, each engineered to catch automated processes that resolve package names without the contextual recognition an experienced engineer would apply. An agent resolving dozens of dependencies in rapid sequence is a more reliable victim of this technique than a developer who might catch the discrepancy on inspection.
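One way to give an agent the contextual check it lacks is to compare every requested package name against an approved list before resolution. The sketch below is illustrative only: the `APPROVED_PACKAGES` set, the `check_package` helper, and the 0.8 similarity threshold are assumptions made for this example, not part of any tool named above.

```python
from difflib import SequenceMatcher

# Hypothetical allowlist; a real one would come from organizational policy.
APPROVED_PACKAGES = {"openai", "tensorflow", "torch", "transformers"}


def similarity(a: str, b: str) -> float:
    """Return a 0..1 similarity ratio between two package names."""
    return SequenceMatcher(None, a, b).ratio()


def check_package(name: str, threshold: float = 0.8) -> str:
    """Classify a requested name as 'approved', 'suspicious', or 'unknown'."""
    normalized = name.lower().replace("_", "-")
    if normalized in APPROVED_PACKAGES:
        return "approved"
    for approved in APPROVED_PACKAGES:
        # Flag names that embed an approved name or sit very close to one.
        if approved in normalized or similarity(normalized, approved) >= threshold:
            return "suspicious"
    return "unknown"


if __name__ == "__main__":
    for requested in ("tensorflow", "tensorfllow", "openai-official", "chatgpt-api"):
        print(requested, "->", check_package(requested))
```

Under an allowlist policy, only "approved" names would be installable. The "suspicious" label exists to prioritize review, and a substring check this simple will also flag some legitimate packages.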

Agent-to-agent architectures extend the problem further. As organizations build pipelines where one AI system delegates tasks to another, the attack surface multiplies with each handoff. A compromised instruction passed from an orchestrating agent to a subordinate one can propagate changes across the entire workflow before any monitoring layer has the opportunity to flag unusual behavior. The subordinate agent often executes with the same permissions as the orchestrator, and neither system carries the judgment to recognize that the instruction it received was not legitimate.
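The permission inheritance described above can be narrowed by having each handoff carry an explicit, reduced set of permissions. The following is a minimal sketch of that idea. The `AgentContext` class and the permission names are hypothetical, invented for this illustration rather than taken from any agent framework.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class AgentContext:
    """Hypothetical record of which permissions an agent currently holds."""

    name: str
    permissions: frozenset[str]

    def delegate(self, child_name: str, requested: set[str]) -> AgentContext:
        # A subordinate can receive only permissions the delegator already holds.
        excess = requested - self.permissions
        if excess:
            raise PermissionError(f"{self.name} cannot grant {sorted(excess)}")
        return AgentContext(child_name, frozenset(requested))

    def require(self, permission: str) -> None:
        # Call before any privileged action; raises if the agent is out of scope.
        if permission not in self.permissions:
            raise PermissionError(f"{self.name} lacks {permission!r}")


if __name__ == "__main__":
    orchestrator = AgentContext(
        "orchestrator", frozenset({"repo:read", "repo:write", "deploy"})
    )
    tester = orchestrator.delegate("tester", {"repo:read"})
    tester.require("repo:read")  # allowed
    try:
        tester.require("deploy")  # outside the delegated scope
    except PermissionError as exc:
        print("blocked:", exc)
```

With this shape, a compromised instruction that reaches the subordinate agent can act only within the scope it was handed, not the orchestrator's full scope.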

Beyond the build pipeline, the AI models that organizations are incorporating into their systems represent a distinct category of supply chain risk, one that has no direct equivalent in traditional software security. Pre-trained models can contain hidden backdoors or malicious features that safety evaluations fail to surface, because models are opaque binary artifacts that static inspection cannot fully characterize. LoRA adapters, which are widely used to fine-tune base models efficiently, introduce their own exposure. A malicious LoRA adapter merged with a legitimate base model can compromise the integrity of the entire system. This has been demonstrated both in collaborative model-merging environments and through popular inference deployment platforms like vLLM and OpenLLM, where adapters can be downloaded and applied to a deployed model without requiring access to the base model itself.

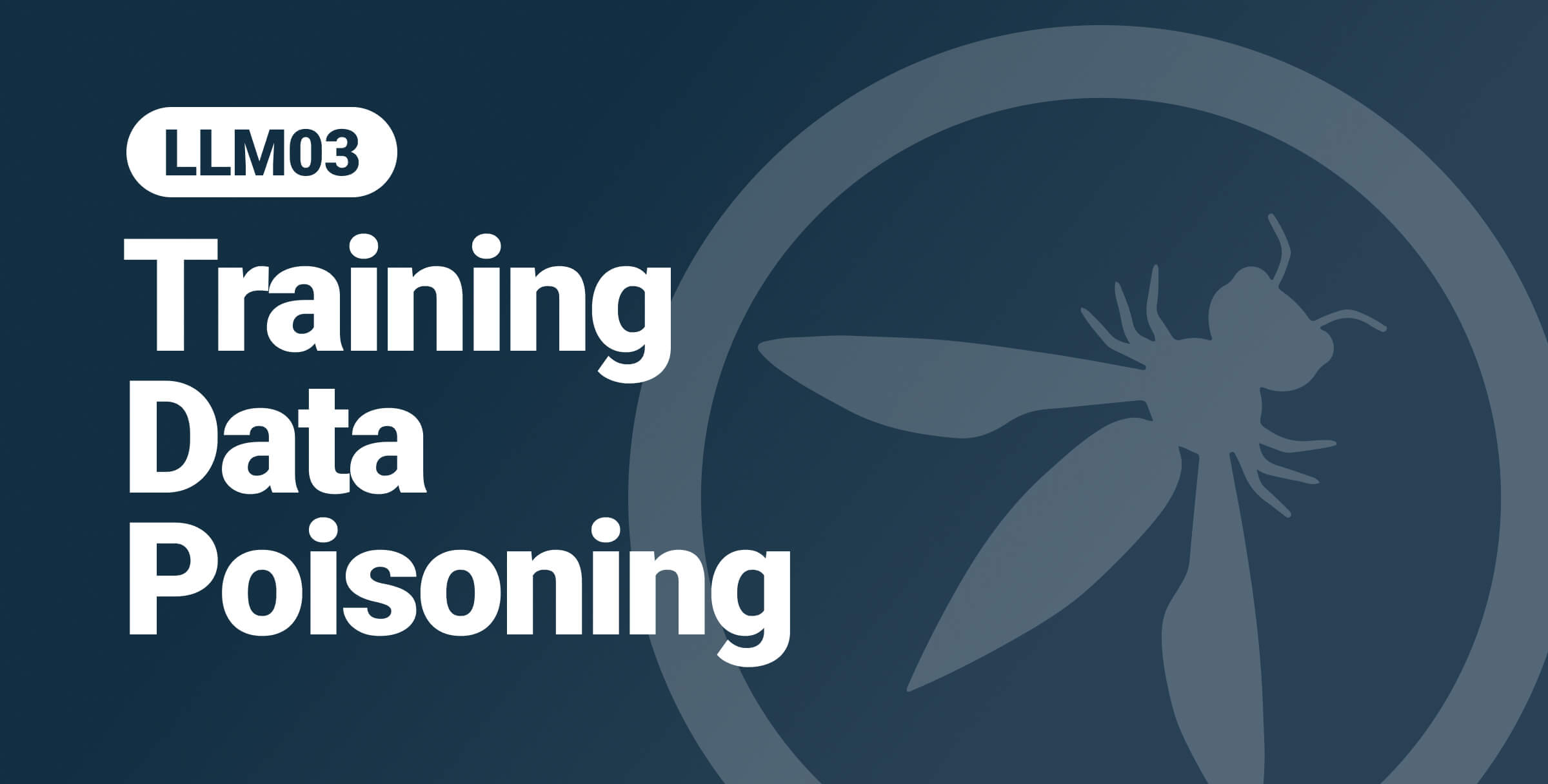

Data poisoning at training time operates earlier in the chain and is correspondingly harder to detect through post-deployment monitoring. Attackers who poison publicly available datasets can embed backdoors that activate only on specific inputs, meaning a model can pass conventional safety benchmarks while carrying targeted vulnerabilities that surface only under conditions the attacker controls. A model fine-tuned on a poisoned dataset inherits those vulnerabilities regardless of how carefully the fine-tuning process itself is conducted.

Model repositories represent the distribution layer through which all of this reaches production environments. Platforms like Hugging Face have been exploited through compromised publisher accounts, malicious format conversion services, and directly tampered model parameters, with one documented case demonstrating that an attacker could bypass the platform's safety features by modifying model weights directly. Because model cards and associated documentation carry no cryptographic guarantees about the artifact they describe, organizations downloading models from public repositories have limited ability to verify that what they are running corresponds to what was originally published.
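Absent signed provenance, a partial mitigation is to record a digest of the model file when it is first vetted and to refuse to load anything that does not match. The sketch below assumes the expected SHA-256 value was obtained through a trusted channel, and the function names are illustrative. A matching digest shows only that the bytes are unchanged since vetting, not that the weights are benign.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream the file in chunks so large model weights never sit fully in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_model(path: Path, expected_sha256: str) -> bool:
    """Return True only if the file's digest equals the digest recorded at vetting time."""
    actual = sha256_of_file(path)
    return hmac.compare_digest(actual, expected_sha256.lower())
```

A loader would call `verify_model` before deserializing the file and abort on `False`.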

What AI Changes About the Attacker's Toolkit

The attack surface expansion is compounded by parallel changes in what attackers can do with AI on the offensive side. AI-generated malware is polymorphic by default, with each instance structurally unique while maintaining the same underlying purpose, which makes signature-based detection unreliable across the board. More sophisticated variants include sandbox detection logic that waits for environmental signals of a real development context before activating. That patience is an architectural response to the detection tools organizations deploy downstream of the build process, and it means that the malware most likely to reach production is specifically designed to evade the controls positioned to catch it.

IBM's Cost of a Data Breach Report 2025 found that organizations take an average of 241 days to identify and contain a breach. Against malware that lies dormant and mutates continuously, that window is long enough for an attacker to achieve significant objectives well before any response begins.

Google's Research on Provenance

Google's Secure AI Framework research identifies three structural differences between AI supply chains and traditional software supply chains that defensive architecture needs to account for. First, AI relies heavily on data rather than code, creating distinct security challenges around provenance, poisoning, and versioning that code management practices do not address. Second, AI models are opaque in ways that traditional software is not, making manual review impossible at scale. Third, the need for provenance is more acute for AI because data poisoning and model tampering operate at a layer that traditional integrity verification does not reach.

The ecosystem for storing, changing, and retrieving datasets is less mature than the one for code management, yet tamper-proof provenance is the primary mechanism through which organizations can verify the identity of a model producer and confirm a model's authenticity. That gap is not a secondary concern. It is the precise mechanism through which compromised models reach production environments without triggering the controls organizations believe are protecting them.

What Rigorous Defense Looks Like

The defensive architecture that addresses this landscape is organized around three areas that work together rather than independently.

Establishing strict privilege boundaries means treating AI agents as privileged pipeline participants subject to the same identity and access controls applied to any system with production credentials. Agents should operate in sandboxed environments with controlled network egress, so that a manipulated agent cannot reach services or exfiltrate data through connections the pipeline was not designed to permit.
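The egress rule can be expressed as a small policy function that decides whether an agent's outbound request is permitted. This is a sketch under stated assumptions: the host list is hypothetical, and in practice enforcement belongs at the network layer (a proxy or firewall), with code like this serving only as the policy decision.

```python
from urllib.parse import urlsplit

# Hypothetical egress allowlist for a build agent.
ALLOWED_EGRESS_HOSTS = frozenset({"pypi.org", "files.pythonhosted.org"})


def egress_allowed(url: str, allowed_hosts: frozenset = ALLOWED_EGRESS_HOSTS) -> bool:
    """Permit only HTTPS requests whose exact hostname is on the allowlist."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False
    host = (parts.hostname or "").lower()
    # Exact match only: suffix or substring matching would admit lookalike hosts.
    return host in allowed_hosts
```

Matching on the parsed hostname rather than on the raw URL string is deliberate, since an approved name can otherwise be smuggled into a path, query string, or userinfo component.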

Trusted dependency controls through allowlists and hash pinning ensure that agents can only resolve package names against registries the organization has explicitly approved. Each dependency should be pinned to a specific verified artifact rather than a version string, because a version string can be satisfied by a different or modified artifact, while a cryptographic hash matches only the exact bytes that were verified.
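A minimal sketch of that check follows, assuming a lockfile that maps each package name to an approved registry and a SHA-256 digest. The `Pin` structure, the `APPROVED_REGISTRIES` value, and the `verify_artifact` helper are hypothetical names for this example.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Pin:
    """Hypothetical lockfile entry: where an artifact may come from and its digest."""

    registry: str
    sha256: str


# Hypothetical set of registries the organization has approved.
APPROVED_REGISTRIES = frozenset({"https://pypi.org/simple"})


def verify_artifact(name: str, registry: str, payload: bytes, lockfile: dict) -> None:
    """Raise unless the artifact is pinned, from its approved registry, and byte-identical."""
    pin = lockfile.get(name)
    if pin is None:
        raise LookupError(f"{name} is not pinned in the lockfile")
    if registry not in APPROVED_REGISTRIES or registry != pin.registry:
        raise PermissionError(f"{name} was fetched from unapproved registry {registry}")
    if hashlib.sha256(payload).hexdigest() != pin.sha256:
        raise ValueError(f"{name} does not match its pinned hash")
```

Package managers offer comparable enforcement natively. pip's hash-checking mode (`--require-hashes`) is one example, and where such a mode exists it is preferable to a hand-rolled check.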

Continuous monitoring should feed agent activity into security operations infrastructure rather than a separate audit trail reviewed infrequently. Automated posture assessment against frameworks and regulations like ISO 27001, SOC 2, or NIS2 is replacing static vendor questionnaires in organizations that have recognized how quickly this threat landscape is moving. The same monitoring discipline should extend to model provenance, with cryptographic signing and artifact verification applied to any model incorporated into the pipeline regardless of its source.
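Feeding agent activity into security operations usually starts with emitting each action as a structured, machine-parseable event. The sketch below shows one possible shape using only the standard library. The field names and the `agent.audit` logger name are assumptions for this example, not a schema from any particular SIEM.

```python
import json
import logging
from datetime import datetime, timezone

# Hypothetical logger name; handlers would forward records to the SOC pipeline.
audit_logger = logging.getLogger("agent.audit")


def agent_event(agent_id: str, action: str, target: str, **details) -> str:
    """Serialize one agent action as a JSON line with a UTC timestamp."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,
        "action": action,
        "target": target,
        "details": details,
    }
    return json.dumps(record, sort_keys=True)


def log_agent_event(agent_id: str, action: str, target: str, **details) -> None:
    """Emit the event through standard logging so existing handlers can ship it."""
    audit_logger.info(agent_event(agent_id, action, target, **details))
```

Routing these events through the same handlers as other security telemetry is what keeps them out of a separate, rarely reviewed audit trail.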

The governance principle underneath all three approaches is the same one that should anchor the broader response. AI agents and AI models are privileged participants in the software lifecycle and need to be governed accordingly.